
DATE CHECKED THIS PAGE WAS VALID: 14/09/2023

So there is quite a bit to do when setting up for KVM. In How To Setup Zramswap And Make Your PC Awesome we touched on setting up zram so that you can run more VMs, but there is actually a way to get even better performance that I did not mention in that guide.

Here we will cover some additional tunables, so once you have zram set up and extra VMs running, let's do a bit more to make even more memory available.

As with zram, the cost is more CPU cycles, so on a very slow CPU that might not be desirable, but if you have spare CPU there is no reason not to free up some RAM using things like zram or the other KVM switches available. While zram compresses what is in RAM to make more available, KVM can deduplicate what is used so that identical memory is only stored once across machines.

Let's start by installing Virtual Machine Manager for Debian (by the way, if you ever need copy/paste functionality between the VM and your host, just install the “spice-client-gtk” and “spice-vdagent” packages on the VM, then turn it off and back on):

sudo apt-get install virt-manager qemu-kvm ovmf swtpm-tools

There is a lot to install so sit back and wait a while then reboot the machine when done so it can complete.

Once back in the machine you can start enabling some tweaks. The first thing is to allow passing hardware through to your VMs, so you will want to enable that (as it's useful for a variety of reasons), and also to check that nested VMs are enabled (they normally are).

Nested VM's

First thing to check is if nested vm's are allowed. You can check this by:

cat /etc/modprobe.d/kvm.conf

And you should see this line returned: 'options kvm_intel nested=1' (for Intel CPUs) or 'options kvm_amd nested=1' (for AMD).

On mine this was already enabled, but if you are missing that file or the option, first check what your system reports with whichever of these matches your CPU:

cat /sys/module/kvm_intel/parameters/nested

cat /sys/module/kvm_amd/parameters/nested

If the relevant command returns a 1 (some kernels print Y instead), then you can enable the function by creating the file, or editing it, to include the option you need, eg:

sudo nano /etc/modprobe.d/kvm.conf

and add either of these two lines depending on CPU:

options kvm_intel nested=1

or

options kvm_amd nested=1

Obviously after enabling nested vm support you will need to reboot for it to take effect.
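If you would rather not remember which of the two paths applies, the check can be sketched as a small shell function. This is only an illustration: the function name check_nested and the SYSFS_ROOT override (which lets you point it at a test directory) are my own assumptions, not anything KVM ships.

```shell
# Print the nested setting of whichever KVM vendor module is loaded.
# SYSFS_ROOT is a hypothetical override for testing; it defaults to /sys.
check_nested() {
    root="${SYSFS_ROOT:-/sys}"
    for mod in kvm_intel kvm_amd; do
        f="$root/module/$mod/parameters/nested"
        if [ -r "$f" ]; then
            echo "$mod nested=$(cat "$f")"
            return 0
        fi
    done
    echo "no kvm_intel or kvm_amd module loaded"
    return 1
}

check_nested || true
```

A value of 1 or Y after the reboot means the option was picked up.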

Passthrough Hardware

The next thing we like to tinker with is the ability to pass bits of hardware to the VM so that the VM can use it directly. While it means the host can no longer use the hardware that was passed, it gives the VM direct access to it, which has performance benefits, such as passing a GPU to a VM so it can do some encoding using the GPU. While performance is not 100% of what you get on bare metal, it's not bad, probably something like 97% as good, and way better than emulation.

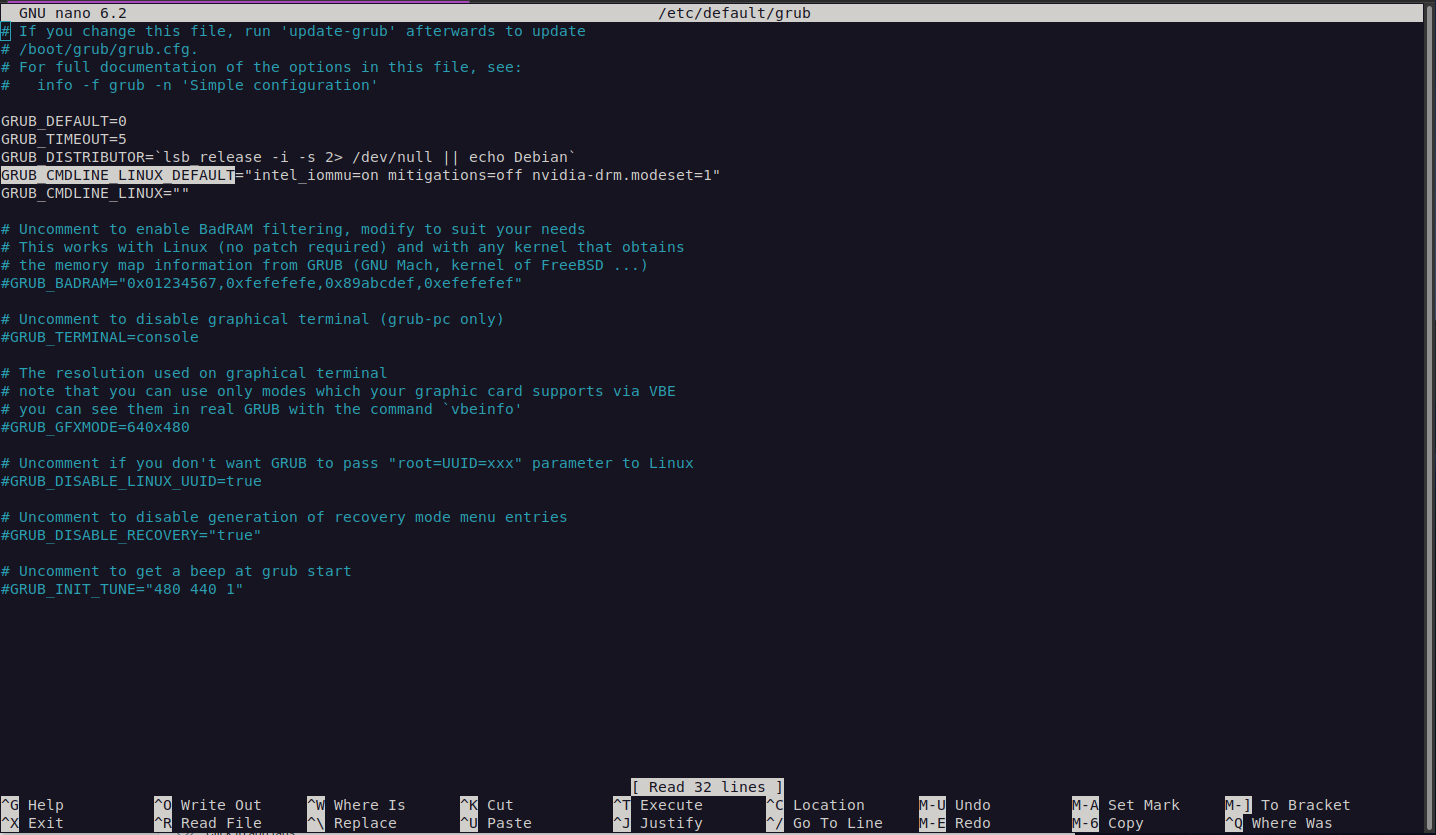

To enable this we just need to edit grub:

sudo nano /etc/default/grub

We want to add either intel_iommu=on or amd_iommu=on to the GRUB_CMDLINE_LINUX_DEFAULT line. eg: here is one on a test machine I am using:

You will see that, as I have an Intel chip, I have added the intel_iommu=on parameter onto that line. I also have a couple of other parameters such as mitigations=off and nvidia-drm.modeset=1, but these should not be added as they are generally not required and decrease the security of the system. I'm simply showing you what I had on a test system so you can see where to make any modifications that you may need.

Once we have added this, saved the file and closed nano, run sudo update-grub so the change is written to the boot configuration (it takes effect on the next reboot), and then we can move on to the next step.
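After the next reboot it is worth confirming that the parameter actually reached the running kernel. A minimal sketch, assuming the flag is spelled exactly as above; has_iommu_flag is a helper name I made up, and it simply looks for the flag in the string you hand it (here the contents of /proc/cmdline).

```shell
# Succeeds if the given kernel command line contains an IOMMU enable flag.
has_iommu_flag() {
    case " $1 " in
        *" intel_iommu=on "*|*" amd_iommu=on "*) return 0 ;;
        *) return 1 ;;
    esac
}

# /proc/cmdline holds the parameters the running kernel was booted with.
if has_iommu_flag "$(cat /proc/cmdline 2>/dev/null)"; then
    echo "IOMMU flag present on the running kernel"
else
    echo "IOMMU flag not found on the running kernel"
fi
```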

KVM optimizations

So we are already getting some more RAM out of the system using zram, but in some cases this won't be super useful (although you can also use both, or some hybrid of the two), and another option is to deduplicate identical bits of memory to save further RAM. This can be done using the ksmd service, so let's configure this and see what to change to get some semi good values.

The two things we are interested in are the files (everything is a file in Linux) '/sys/kernel/mm/ksm/run' and '/sys/kernel/mm/ksm/pages_to_scan'.

The first file turns the feature on or off, while the second changes the number of pages it will scan every 20ms (by default). So I am interested in finding values for this file that are reasonable. To enable the feature we can use:

sudo su
echo 1 > /sys/kernel/mm/ksm/run

And by default it will scan 100 pages every 20ms

cat /sys/kernel/mm/ksm/pages_to_scan

100 is not a super useful value, as with 4KB pages it is only 400KB per cycle, or about 19.5MB per second, or a bit over 1GB per minute. This might sound like a lot, but if you have 128GB of RAM assigned to VMs it would take about 2 hours to do one complete scan through your RAM. While this will eventually get through everything, it can be more efficient to find a slightly more reasonable value.
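The arithmetic above is easy to redo for other values with a couple of shell functions. This is a rough sketch: the function names are mine, it assumes 4KB pages, and it gives a nominal figure that ignores the time ksmd spends actually scanning between sleeps, so real passes will be somewhat slower.

```shell
# Nominal scan rate in MiB per second.
# $1 = pages_to_scan, $2 = sleep between batches in milliseconds.
ksm_rate_mib() {
    echo $(( $1 * 4 * 1000 / $2 / 1024 ))
}

# Whole minutes for one nominal pass over $3 GiB of RAM.
ksm_scan_minutes() {
    echo $(( $3 * 1024 * 1024 * $2 / ($1 * 4 * 1000 * 60) ))
}

echo "default: $(ksm_rate_mib 100 20) MiB/s, $(ksm_scan_minutes 100 20 128) min for 128 GiB"
```

Integer maths rounds down, so the 19.5MB per second shows up as 19 and the roughly 2 hours as 111 minutes.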

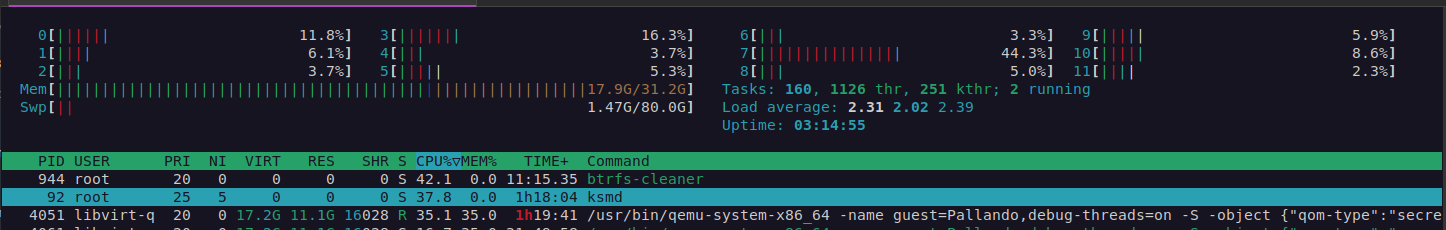

The cost of this is that the CPU will be more taxed. The ksmd service appears to be single threaded, so it will only use 1 of your available CPU cores; once that core reaches 100%, increasing the value will make it less efficient, as you are asking it to do more than the CPU can keep up with. During my testing I found that around 40% CPU (on a single core) is alright if you have a lot of spare cores, or perhaps less if you don't have many free CPU cores. I have 12 on the system I tested on, so giving 1 core up for better memory management seemed like an alright trade off (leaving it running at 40% all the time). However I did feel this was a little on the high side and would be happy with a lower value as well.

You can experiment yourself based on what I will show you here, but a good low value would probably be around 10% CPU (for servers with high uptime that can normalize memory over several hours), a mid value around 25%, and a high value around 40% (more aggressive, for a desktop PC that spins up VMs for a lab then turns them off after a couple of hours). The higher the value, the more the processor will scale up, use power, need cooling to compensate, etc.

To check what is reasonable to you just increase the pages_to_scan value eg:

echo 4096 > /sys/kernel/mm/ksm/pages_to_scan

On my system a value of 4096 is 16 megabytes every cycle, or 800MB a second (4096*4/1024 = 16, and the default sleep is 20 milliseconds, or 50 cycles a second). Thus every minute about 47GB is being scanned and deduplicated if possible. On a modern system with lots of RAM, getting through all the RAM in around 15 minutes would be good, so that the RAM is checked for optimization again and again. As it's not uncommon for systems to have around 256GB of RAM these days, this value seems fairly reasonable to me at this time.

Mine is probably a little high, but the final optimization I leave to you: try to aim to keep CPU under 40% and the scan time around 15 minutes or so. That way when you fire up a VM, 15 minutes later some of the RAM can be claimed back. Setting this value too high is detrimental, so try to experiment to find the lowest value that is reasonable. There is no point scanning so quickly that everything is done every minute, for example; think of a reasonable goal like getting through the RAM in 15 minutes and work to find the lowest value that hits that.

My test value above is high and not something I would use unless I was testing to see what I saved quickly, just to benchmark whether it was worth it at all. Also note the system I was testing on was a desktop, not a server. As mentioned, a server would want a low CPU cost, like 10% or under. Setting this value high costs CPU performance, so on a server where you can let it optimize over many hours there is no benefit to an aggressive value.
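To turn the "get through the RAM in about 15 minutes" goal into a starting number, the same arithmetic can be run backwards. A sketch under the same assumptions as before (4KB pages, nominal rate only); ksm_pages_for_target is a name I invented, and the result is a starting point for experiments rather than a recommendation.

```shell
# Lowest pages_to_scan that nominally covers $1 GiB in $2 minutes
# with $3 milliseconds of sleep between batches.
ksm_pages_for_target() {
    ram_kib=$(( $1 * 1024 * 1024 ))
    # 4 KiB per page multiplied by the number of batches in the time budget
    kib_per_page_slot=$(( 4 * ($2 * 60 * 1000 / $3) ))
    # integer division rounded up
    echo $(( (ram_kib + kib_per_page_slot - 1) / kib_per_page_slot ))
}

echo "128 GiB in 15 min: $(ksm_pages_for_target 128 15 20) pages per batch"
```

You would then watch the ksmd CPU usage at that value and lower it if the core is working harder than you are happy with.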

Here is htop showing the CPU being taxed:

If you don't see the ksmd process, check that htop does not have “hide kernel threads” set in the setup.

For example, after running 2 Windows VMs for a short while I can check how much actual memory is saved by:

cat /sys/kernel/mm/ksm/pages_shared

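My value was 311881 pages, which is 311881*4/1024 = 1218MB, or about 1.2GB of memory saved (both VMs together were using 22GB). If I fired up more VMs I would continue to save a bit on each one. It's not massive and it does tax the CPU, but it's something, and when used in conjunction with other optimisations it can help. I only got about 1.2/22, or roughly 5%, of RAM reclaimed from this basic test and had to give up a CPU core running at 40% all the time. Note that the kernel documentation describes pages_shared as the number of shared pages in use and pages_sharing (in the same directory) as how many more sites are sharing them, ie how much is saved, so it is worth reading both.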
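The page count to megabytes conversion can be wrapped up so you don't have to do it by hand each time. A small sketch, assuming 4KB pages; pages_to_mib and the KSM_DIR override are names I made up for illustration, and the loop prints both pages_shared and pages_sharing so you can compare them.

```shell
# KSM_DIR is a hypothetical override, handy for trying this on a test directory.
KSM_DIR="${KSM_DIR:-/sys/kernel/mm/ksm}"

# Convert a count of 4 KiB pages to whole MiB.
pages_to_mib() {
    echo $(( $1 * 4 / 1024 ))
}

for counter in pages_shared pages_sharing; do
    if [ -r "$KSM_DIR/$counter" ]; then
        pages=$(cat "$KSM_DIR/$counter")
        echo "$counter: $pages pages, $(pages_to_mib "$pages") MiB"
    fi
done
```

For the value quoted above, pages_to_mib 311881 gives 1218.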

To make the changes permanent, as they are lost on reboot we will need to make a startup script. So once you have experimented and found values you like, then you can move onto doing this. The final value I ended up choosing was 1024 pages each scan so this will be reflected below in the example.

First I make a script in a directory of my choosing. In my case I created a directory on my root /Scripts and added a file in there.

For example:

sudo mkdir /Scripts
sudo nano /Scripts/KSMScriptRun.sh

Then added the following to the script:

#!/bin/bash
echo 1 > /sys/kernel/mm/ksm/run
echo 1024 > /sys/kernel/mm/ksm/pages_to_scan

I then made it executable:

sudo chmod +x /Scripts/KSMScriptRun.sh

Now I need to tell the system to run this script when the system boots. You can do this by creating a systemd unit under the relevant location:

sudo nano /etc/systemd/system/KSMScriptPete.service

Next fill in the relevant info in the file:

[Unit]
Description=Enable KSM same page merging at boot
After=default.target

[Service]
ExecStart=/Scripts/KSMScriptRun.sh

[Install]
WantedBy=default.target

Next enable the script:

sudo systemctl enable KSMScriptPete.service

If you reboot you should find that the values you were testing with above are already filled in by your script every time the system boots, without you having to manually edit the files each time you turn on the box :)

That is pretty much all the optimisations I currently use that don't cause me any issues, and how I get to the values, however let me know if you have any others :)
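A quick way to confirm the unit did its job after a reboot is to print the two files back. This is just a sketch; ksm_settings and the KSM_DIR override are names I chose for the example.

```shell
# KSM_DIR is a hypothetical override so the function can be tried on a test directory.
KSM_DIR="${KSM_DIR:-/sys/kernel/mm/ksm}"

# Print the two tunables set by the boot script, one name=value pair per line.
ksm_settings() {
    for f in run pages_to_scan; do
        [ -r "$KSM_DIR/$f" ] && echo "$f=$(cat "$KSM_DIR/$f")"
    done
    return 0
}

ksm_settings
# systemctl status KSMScriptPete.service   # shows whether the unit ran at boot
```

With the script from this guide you would expect run=1 and pages_to_scan=1024.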

How do you actually make a VM

It dawned on me after typing all of the above that there might actually be people who don't know how to use KVM, so I thought I would type up a really fast how-to here. I kind of assumed that people had already installed it, but if not you do that by:

sudo apt-get install virt-manager

I did go and add this in the beginning of the guide in case you didn't know but it does not address how to actually use it.

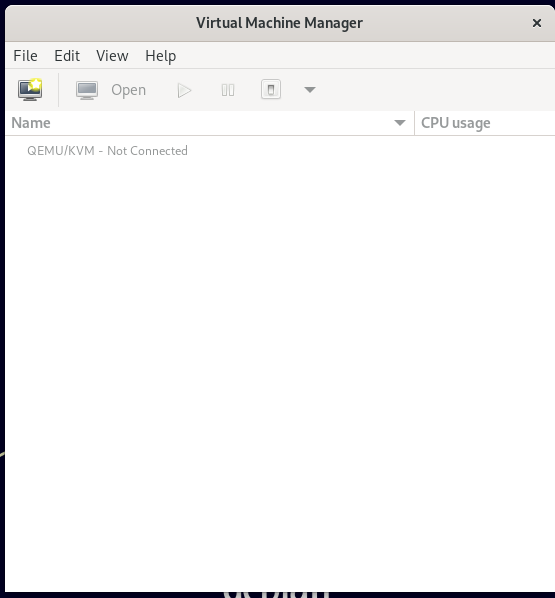

After installing you will want to give the box a reboot and then run virt-manager. It will also be in the apps menu.

By default Debian (not Ubuntu) asks for your password when you open it. If you don't like this you can add your account to the relevant groups:

sudo usermod -a -G libvirt $(whoami)
sudo usermod -a -G kvm $(whoami)

But if you don't mind you can authenticate each time also. Either option works.
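If you went the group route, you can check that the membership has taken effect with something like the following. It is a sketch only; in_group is a helper I made up that looks for a name in the list printed by id -nG.

```shell
# Succeeds if group $1 appears in the space-separated list $2.
in_group() {
    case " $2 " in
        *" $1 "*) return 0 ;;
        *) return 1 ;;
    esac
}

for g in libvirt kvm; do
    if in_group "$g" "$(id -nG)"; then
        echo "$g: member"
    else
        echo "$g: not a member in this session"
    fi
done
```

New group memberships only apply to new login sessions, so log out and back in before expecting "member" here.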

The first screen is very blank if you haven't used it before:

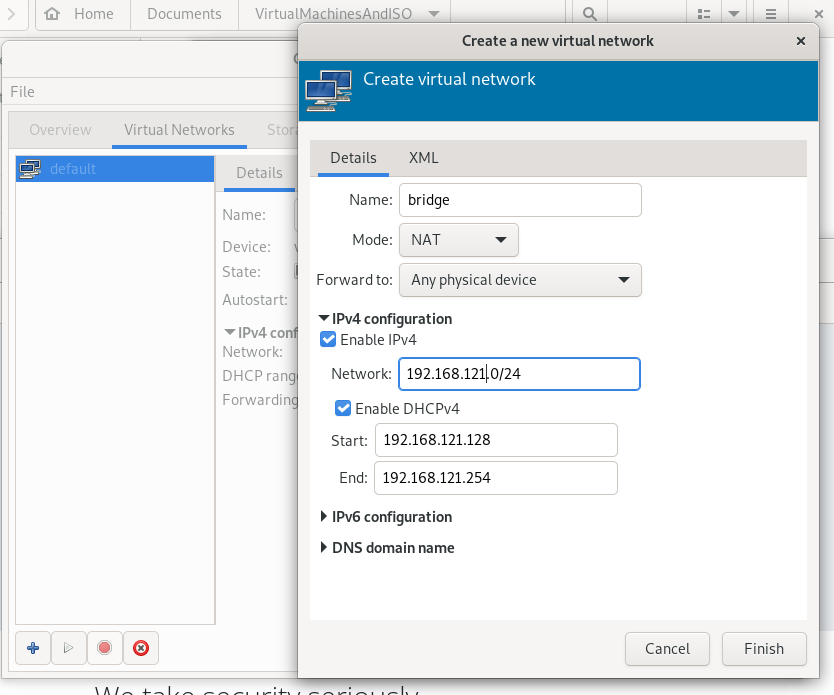

The first thing we should do is define where we want to place machines, and the second thing is to create a network for them. I recommend using a logical location for disk images/ISO images in your home, and a macvtap for the network, as it prevents communication with the host, which is safer. For temporary communication we can create a bridge, then unplug the bridge when not using it. This should be a general setup for something like 99% of machines and will get you going, so let me show this and you can fiddle around with other more complex setups yourself.

Oh, and download an ISO as a test also. For this I downloaded the Fedora ISO image for fun.

In my screenshot above you can see I am “not connected”. If this happens just right click on the QEMU/KVM line and choose connect. It's no big deal, it's just being silly. Next choose edit and connection details. We want to make a new virtual network here, so let's make our own. I want a bridge network. The 'default' network is actually already a bridge on the 192.168.122.0/24 range, but in case you didn't know, the way to create one is to just click the + and fill in details as such:

When you finish it will have autostart and active enabled. I will use this one (virbr1) going forward, but you could use the default one if you wanted. It's just nice to know how to make your own and understand all the steps if need be.
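For anyone who prefers the terminal, roughly the same network can be defined with virsh instead of the GUI. This is a sketch under assumptions: the network name "mybridge", the NAT forward mode and the 192.168.100.0/24 range are hypothetical values I picked for illustration, and net_xml is a helper name of my own. Only the virbr1 bridge name comes from the text above.

```shell
# Print a libvirt network definition. $1 = network name, $2 = bridge device name.
# The address range below is a made-up example; choose one that is free on your system.
net_xml() {
    cat <<EOF
<network>
  <name>$1</name>
  <forward mode='nat'/>
  <bridge name='$2' stp='on' delay='0'/>
  <ip address='192.168.100.1' netmask='255.255.255.0'>
    <dhcp>
      <range start='192.168.100.2' end='192.168.100.254'/>
    </dhcp>
  </ip>
</network>
EOF
}

net_xml mybridge virbr1 > /tmp/mybridge.xml

# Then load it into libvirt:
#   virsh -c qemu:///system net-define /tmp/mybridge.xml
#   virsh -c qemu:///system net-start mybridge
#   virsh -c qemu:///system net-autostart mybridge
```

The last two commands correspond to the "active" and "autostart" ticks in virt-manager.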

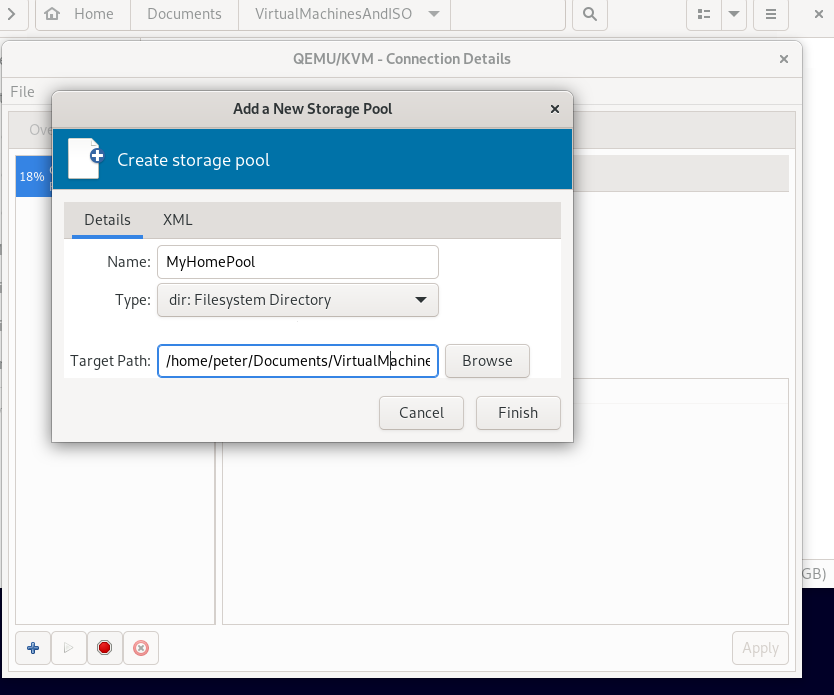

Next let's define our storage area. Click on the storage tab and, instead of the default location, we will create a new one. I have a folder in my home already, with the Fedora ISO I will be using in it.

Click the + and give the pool a name like “MyHomePool” or something and browse to the folder location as such:

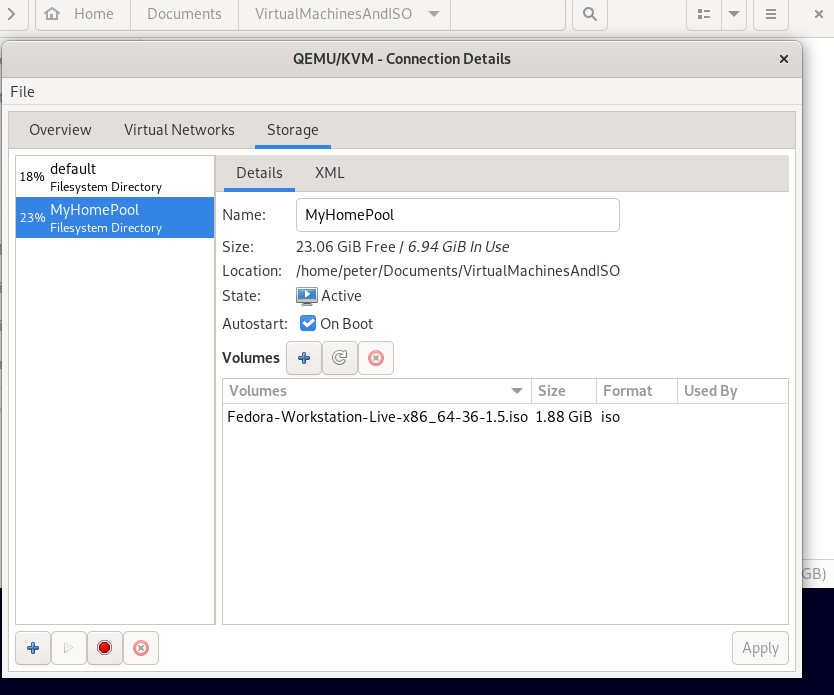

Click finish. If you select that pool you will see whatever files are in there listed and it will be active and enabled on boot:
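The pool can also be created from the command line if you prefer. A sketch only: the run and create_pool helpers are my own, the path $HOME/VMs is a hypothetical stand-in for wherever your folder actually is, and by default the block just prints the commands instead of running them (set DRY_RUN=0 to execute).

```shell
DRY_RUN="${DRY_RUN:-1}"   # 1 = only print the commands, 0 = actually run them

# Either echo a command or execute it, depending on DRY_RUN.
run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# $1 = pool name, $2 = existing directory that holds the images and ISOs.
create_pool() {
    run virsh -c qemu:///system pool-define-as "$1" dir --target "$2"
    run virsh -c qemu:///system pool-start "$1"
    run virsh -c qemu:///system pool-autostart "$1"
}

create_pool MyHomePool "$HOME/VMs"
```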

Now we are ready to make a VM. As I mentioned, the general kind of setup most people want (or me at least) is a VM that is on the same local LAN as the other computers in their network. You can obviously not do this and put it on its own private LAN if you like. However it's generally a good idea not to have the VM guests communicate with the host, as a compromised VM can then try to compromise the host and by extension all VMs running on it. You can set things up exactly how you feel they should be as you go on after this simple tutorial. For us, we will have the VM on the local LAN but separate from the host, and have a bridge that we turn on or off if we want to communicate with it temporarily. This is pretty much a generalized blend of usability, functionality and security while you learn the ins and outs of VM management.

So click File - New Virtual Machine and on the first screen choose Local Install. Browse for the ISO on the next screen by selecting your pool and the ISO in question. The installer should detect what you are installing but you can search the bottom dialogue for your OS if need be or choose a generic option. On the next screen give it some CPU and Memory as you feel appropriate and choose next.

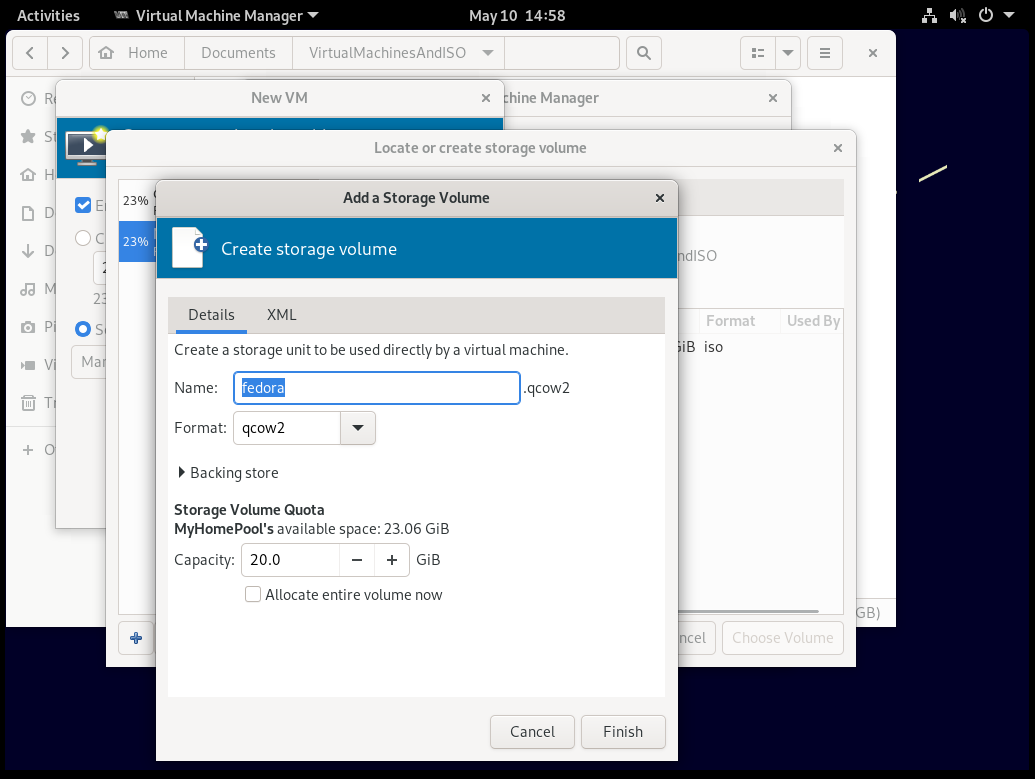

On the next screen I choose “select or create custom storage” and then click “manage”. I then click on my pool and click the + so that on the next screen I can give the disk image a name in the location I wanted:

As you can see, I created a small disk image in the location in my home that I wanted. Click finish and the disk image should appear; select it and click choose volume so that this is where the OS will be installed.

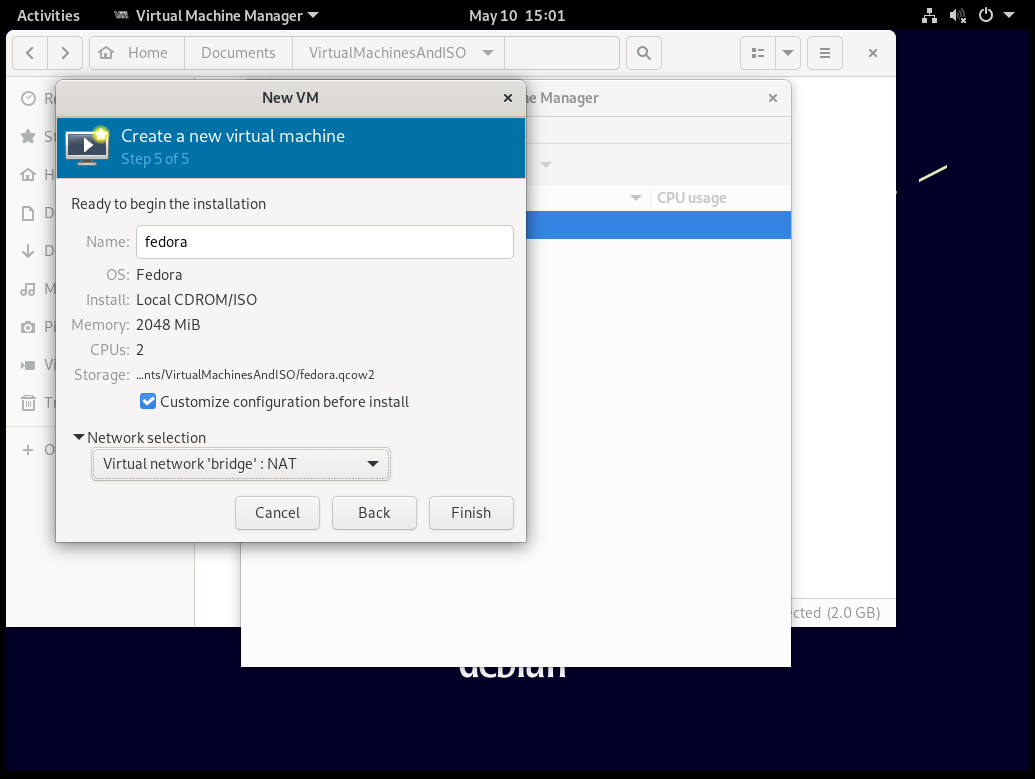

Back on the New VM screen you can click forward and then tick the box that says “customize configuration before install” so we can add our specific networking. Under the Networking selection you can change the 'default' to the 'bridge' network we made earlier and click finish:

On the customization screen you can fiddle around if you want but in this tutorial we are just interested in creating a new network. The current NIC is already the bridge which will facilitate communication to the host machine and provide the VM internet if need be but I want to also add a macvtap adapter so it can be on my local lan, and communicate with devices on my lan such as my NAS or things like that.

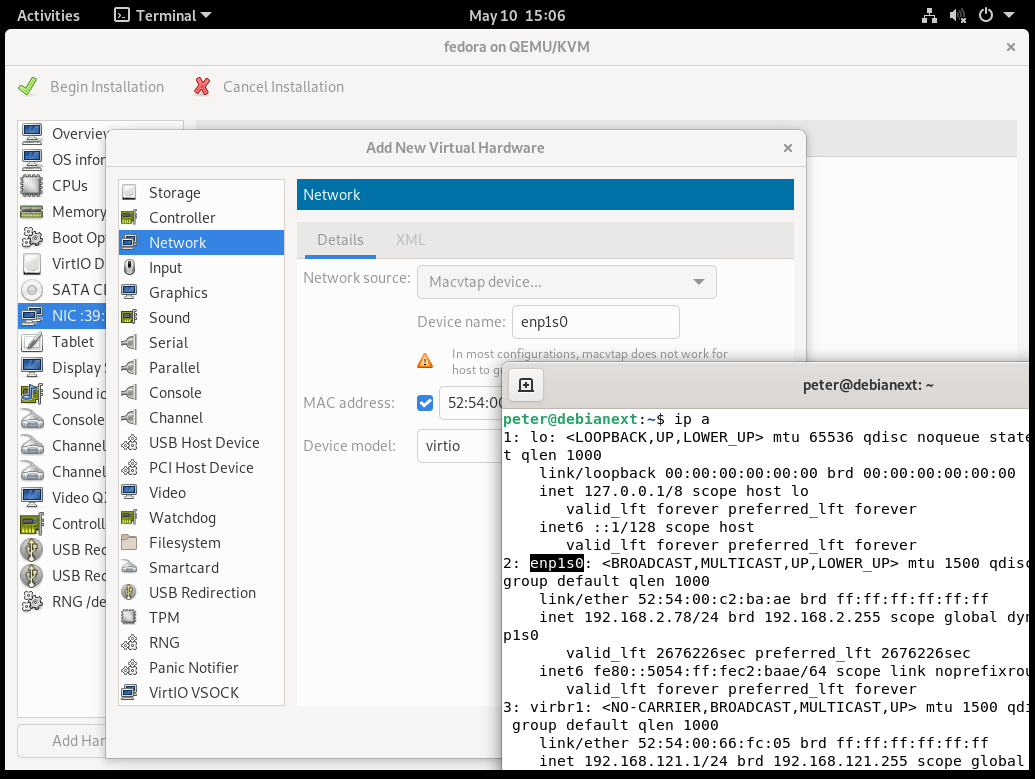

So choose “add hardware” and choose “network”. In the network settings we want a Macvtap device, and under device name you choose the appropriate device of your host machine's network (ie the one shown when you use the 'ip a' command):

I have highlighted the network name on the host machine in the terminal which is on my local lan 192.168.2.x range above. Hopefully this makes sense. You are telling it what network to place that device on with the identifier you type there.

You can then click finish and “begin installation”. At any rate, once you are done you will be able to install your VM of choice and have, in this case, 2 networks: the VM on your local LAN, which can communicate with all PCs on your LAN except the host machine, and a bridge which can allow communication between the host and the VM temporarily if need be.
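For reference, the macvtap adapter added in the GUI ends up as an interface of type 'direct' in the VM's XML. The snippet below is a sketch that generates such a fragment; macvtap_xml is a helper name I made up, enp3s0 is a hypothetical host device name (use the one from your own 'ip a' output), and the virtio model is my assumption rather than something stated above.

```shell
# Print a libvirt macvtap (type='direct') interface for host device $1.
macvtap_xml() {
    cat <<EOF
<interface type='direct'>
  <source dev='$1' mode='bridge'/>
  <model type='virtio'/>
</interface>
EOF
}

macvtap_xml enp3s0

# Saved to a file, this could be attached to an existing VM with:
#   virsh -c qemu:///system attach-device VMNAME macvtap.xml --config
```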

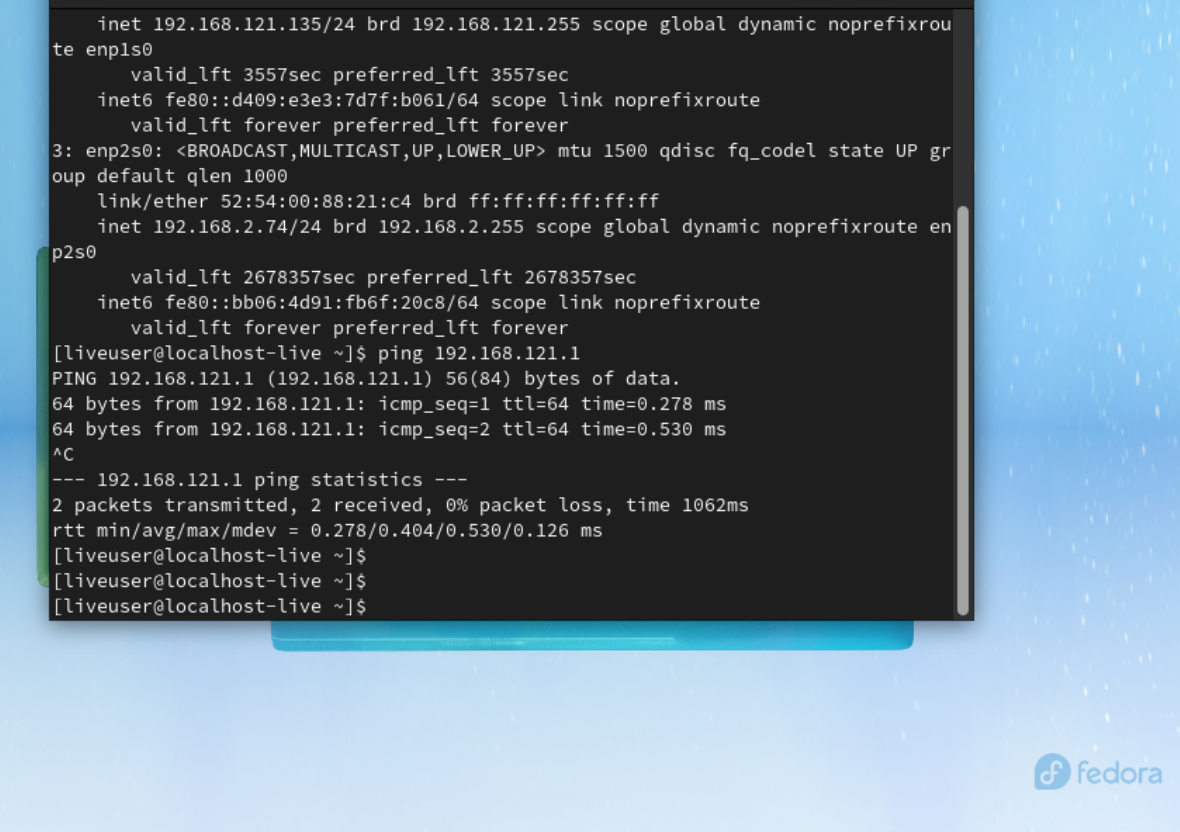

Here we can see the networks and I ping the host (on .1) (and it also got an ip of 192.168.2.74 on my LAN):

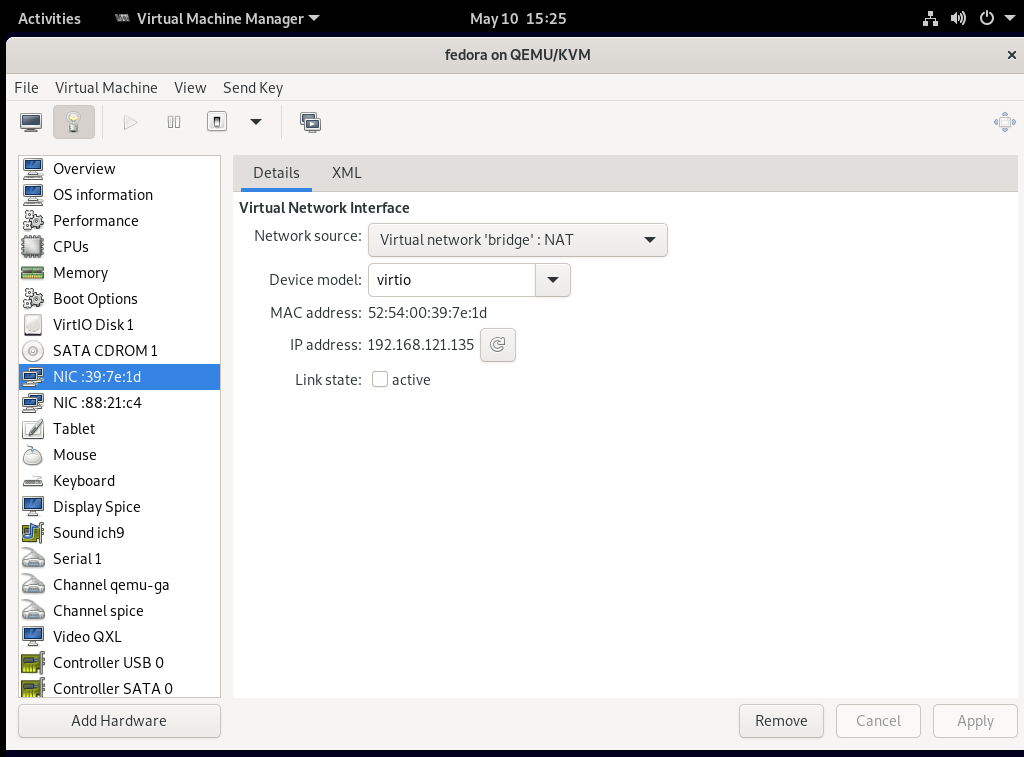

Now what you can do, when you are done with the bridge, is click on the light bulb, choose the bridge network, untick 'active' and then click 'apply'. This is the same as unplugging a cable when you don't need the bridge. You can see that you won't have a connection to that network anymore on the guest ('ip a' command).

That's the basics, good luck :)

Notes

Any VM with the following in the XML will not use the ksmd optimizations:

<memoryBacking> <nosharepages/> </memoryBacking>

Adding that can exclude certain VM's from being memory optimized.
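To see whether a particular VM has been excluded this way, you can search its XML for the element. A minimal sketch: has_nosharepages is a helper name of my own that reads XML on standard input, and the sample piped into it here is just the fragment from above.

```shell
# Succeeds if the XML on stdin contains a <nosharepages/> element.
has_nosharepages() {
    grep -q '<nosharepages */>'
}

printf '<memoryBacking>\n  <nosharepages/>\n</memoryBacking>\n' \
    | has_nosharepages && echo "excluded from KSM"

# Against a real VM (VMNAME is a placeholder):
#   virsh -c qemu:///system dumpxml VMNAME | has_nosharepages && echo "excluded from KSM"
```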

For guest VMs don't forget to install the guest additions on the VM. In Windows guest VMs you have to download them, but on Linux guests you can install them by:

sudo apt-get install qemu-guest-agent spice-vdagent

If you don't install them the guests tend to run suboptimally and slower.


Suggested value for pages to scan is:

echo 1024 > /sys/kernel/mm/ksm/pages_to_scan

Works well on most systems.